JTH


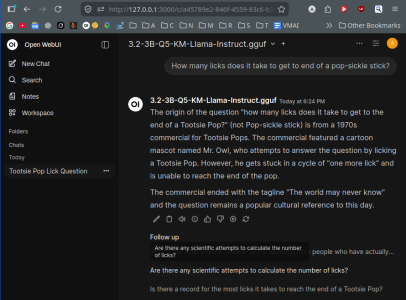

I've been going down numerous rabbit holes testing Llama AI models. I'm running them in a VM, which costs me about 10-15% in resource overhead, but we are still in beta and I don't want to alter the host system.

It started with the idea that I wanted an Internet-in-a-box type of Cyberdeck (a fully offline device) for when the world ends.

You can download all of Wikipedia, and of course any other documents you deem relevant to rebuilding the world.

I can then build an extensive library and use purpose-built AI models to sift through it when I need quick indexing or answers.

If you have the right data and models, you can build advisors, such as a doctor, a farmer, etc.
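To give a feel for the "quick indexing" part, here is a minimal sketch of a plain keyword index over offline documents. It is not an AI model, just a cheap lookup that could narrow down which files to hand to a model afterwards. The file names and sample text are made up for illustration, and `build_index` and `search` are hypothetical helper names.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into simple alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(docs):
    """Map each word to the set of document names that contain it."""
    index = defaultdict(set)
    for name, text in docs.items():
        for word in tokenize(text):
            index[word].add(name)
    return index

def search(index, query):
    """Return the documents that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return []
    hits = set.intersection(*(index.get(w, set()) for w in words))
    return sorted(hits)

# Made-up sample library; in practice these would be files read from disk.
docs = {
    "water.txt": "Boil water to purify it before drinking.",
    "garden.txt": "Water the seedlings daily and plant potatoes in spring.",
}
index = build_index(docs)
print(search(index, "purify water"))
print(search(index, "water"))
```

A real library would need something sturdier (stemming, ranking, an on-disk index), but the idea is the same: find the relevant pages first, then let the model read only those.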

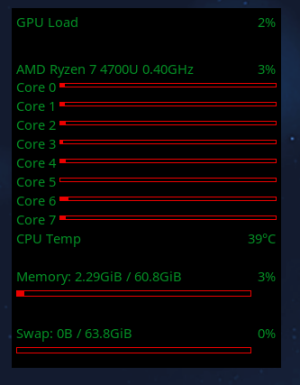

Anyhow, I rebuilt the laptop because I needed one large partition to store the models and databases in one place. An 8-core AMD Ryzen 7 4700U with 16GB of RAM and 500GB of storage (no dedicated GPU) can run "small" Llama 3 8B Instruct models if they are heavily quantized. That is about the equivalent of ChatGPT 3.5, but with about half the context window, so I can run similar (but shorter) tasks.
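For anyone wondering why heavy quantization matters on 16GB, here is a rough back-of-the-envelope sketch. The 1 GB allowance for context cache and runtime buffers is my own assumption, and real quantized files use a bit more than the nominal bits per weight, so treat the numbers as ballpark only. The 15% figure is the upper end of the VM overhead mentioned above.

```python
def model_memory_gb(params_billion, bits_per_weight, overhead_gb=1.0):
    """Rough RAM estimate: weight storage plus a flat allowance (assumed)
    for the context cache and runtime buffers."""
    weight_gb = params_billion * bits_per_weight / 8  # 1e9 params * bytes each / 1e9
    return weight_gb + overhead_gb

def usable_ram_gb(total_gb, vm_overhead_fraction):
    """RAM left for the guest after the VM overhead is subtracted."""
    return total_gb * (1 - vm_overhead_fraction)

budget = usable_ram_gb(16, 0.15)  # worst case of the 10-15% VM loss
print(f"Usable RAM in the VM: ~{budget:.1f} GB")

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    need = model_memory_gb(8, bits)
    verdict = "fits" if need < budget else "does not fit"
    print(f"8B model at {label}: ~{need:.1f} GB ({verdict})")
```

By this estimate the unquantized 16-bit weights alone exceed the machine's RAM, while a 4-bit version leaves room for the OS and the document library tools.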

